TikTok, X warned by IMDA after failing to detect and remove child sexual abuse, terrorism content

Both X and TikTok have been issued with letters of caution that require them to provide regular updates on the roll-out of rectification measures.

PHOTO: REUTERS

SINGAPORE – Social media platforms X and TikTok have been warned after the local authorities found that they did not proactively detect and remove child sexual exploitation and abuse material and terrorism content, respectively.

On X, the number of cases of child sexual exploitation and abuse material originating from, targeting or featuring Singapore users more than doubled to 73 in 2025, from 33 the year before, said the Infocomm Media Development Authority (IMDA) on March 31.

On TikTok, 17 cases of terrorism content were shared by Singapore-based accounts in 2025. The content mostly involved videos with edited footage or audio related to transnational terrorist organisations, said IMDA.

“When some of these were reported to TikTok via its in-app user reporting mechanism, TikTok found that the content did not violate its community guidelines,” said IMDA. “This demonstrated that TikTok did not accurately assess the terrorism content when it was user-reported.”

The findings are part of the Online Safety Assessment Report 2025 – the second such annual report published by IMDA – which assesses how well six major social media platforms protect their users from harmful content.

The report analysed data gathered by IMDA and data from members of the public, public agencies and platforms’ online safety reports covering the period between April 1, 2024, and March 31, 2025. The assessment also included a “mystery shopper” test on the platforms’ user reporting and resolution mechanism.

IMDA has since issued X and TikTok with letters of caution, placing the companies under enhanced supervision until the authorities are satisfied that the issues have been resolved.

During the enhanced supervision period, the platforms must provide regular status updates on the roll-out of rectification measures and demonstrate the effectiveness of the measures in their next annual reports to IMDA, due on June 30.

Should X or TikTok fail to show IMDA that they have improved, the authority said it will not hesitate to explore further options. These include a fine of up to $1 million under the Broadcasting Act.

Under the Code of Practice for Online Safety rolled out in 2023, Facebook, HardwareZone, Instagram, TikTok, X and YouTube are required to minimise Singapore users’ exposure to harmful content, defined as material that endangers public health or that is sexual, violent or related to self-harm, suicide or cyberbullying.

Terrorism and child sexual exploitation and abuse content are “very egregious harms”, said IMDA in its statement on March 31.

Both TikTok and X have accepted IMDA’s findings and committed to countering the harmful content through the use of better detection tools, among others. Human reviewers will also receive more training to better identify child sexual exploitation and abuse material and terrorism content.

X lags in overall online safety ratings

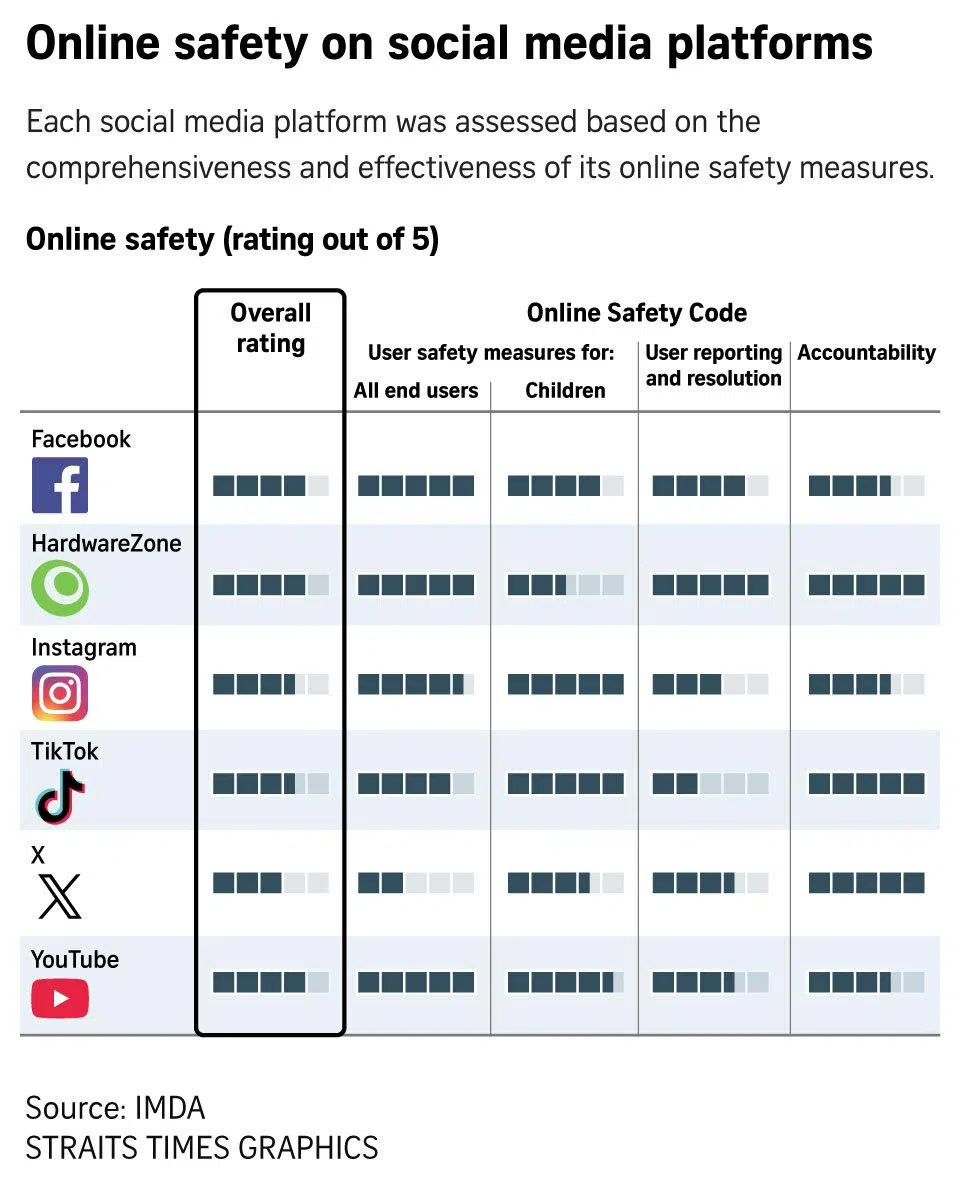

As part of the report, social media services were also rated for their efforts in four key areas: safety measures for all users, safety measures for under-18 users, user reporting and resolution, and accountability. Though Facebook, HardwareZone and YouTube were given the highest overall score of four out of five, there were weaknesses in their child safety measures.

For instance, IMDA detected a few instances where children’s accounts on Facebook and YouTube could still access age-inappropriate content such as nudity. However, the authorities noted that these cases were low in number, with no indication of a systemic issue on either site.

TikTok led in overall ratings in the 2024 report, but came in joint second with Instagram in the 2025 report.

In particular, TikTok’s overall rating in 2025 was pulled down by poor performance in IMDA’s “mystery shopper” tests on its user reporting and resolution mechanism.

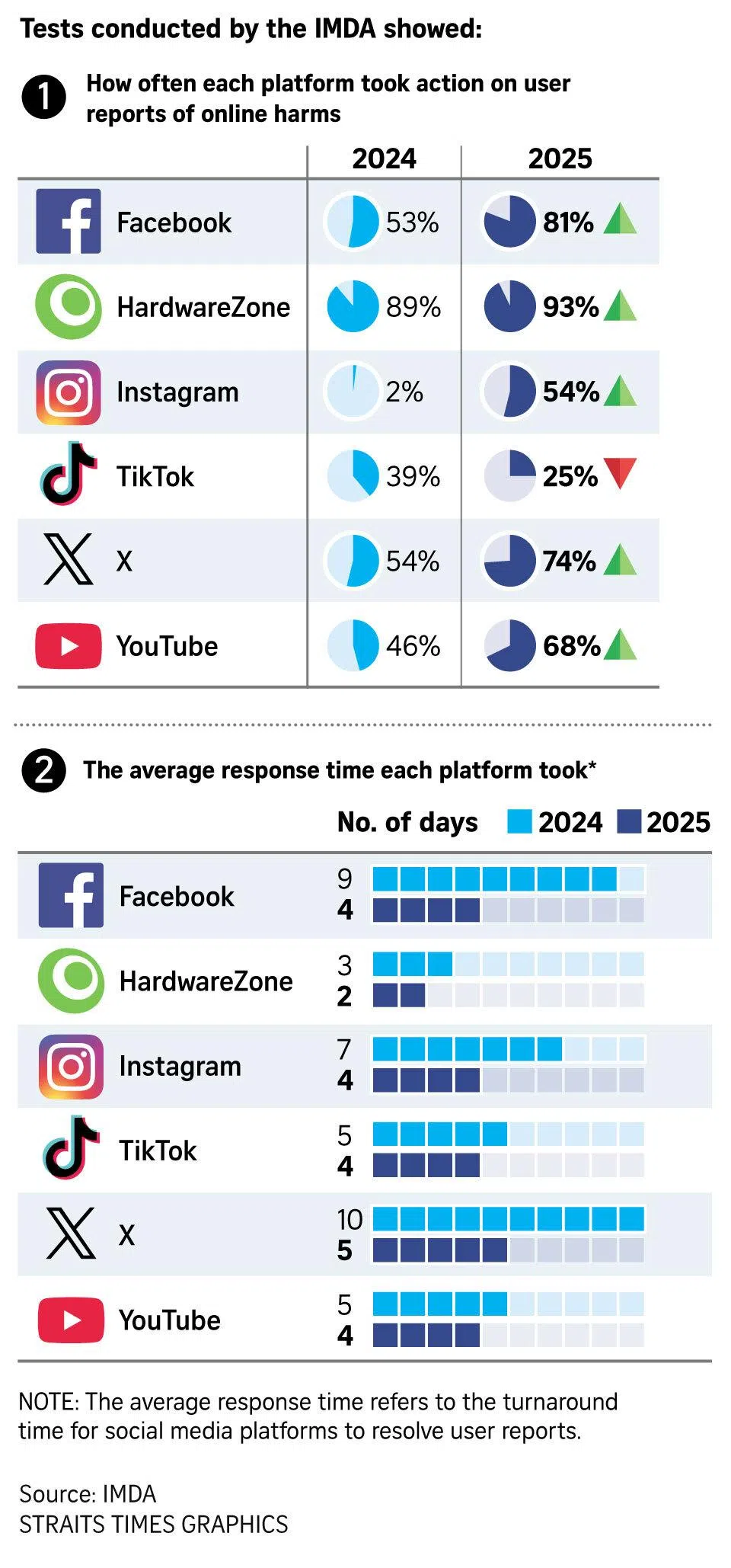

These tests involved IMDA analysts reporting more than 800 cases of harmful content across the six social media platforms through their in-app reporting mechanism, to observe the response from each platform.

TikTok took action on only a quarter of reported harmful content, down from 39 per cent in the previous report. It had the poorest showing across all six platforms in the mystery shopper tests.

X came in last in overall ratings with a score of three. It also had the lowest score on safety measures for all users, in part because of its weakness in proactively detecting and removing child sexual exploitation and abuse material.

“This is the second consecutive year that IMDA has detected child sexual exploitation and abuse material on X, despite informing X of this issue in 2024,” said the report.

In response to IMDA’s findings, X said it has zero tolerance for child sexual exploitation and abuse material, and that it had suspended 17,800 Singapore accounts for such violations during the reporting timeframe.

“Our mission is to promote and protect the public conversation, and ensuring the safety of our users is our top priority,” said X. “X users have the right to express their opinions and ideas without fear of censorship, while we uphold our responsibility to protect them from content that violates our rules.”

The average timeframe for social media services to take action on user reports has decreased across the board, according to the “mystery shopper” tests done by IMDA.

Notably, the average time taken for Facebook to act on harmful content was four days in 2025, down from nine days in 2024. X halved its average time to five days, from 10 days.

Overall, sexual, violent and cyberbullying content were the top three types of harmful content in Singapore that designated social media services removed, either proactively or as a result of user reports.

“(Our) main priority as Singapore’s online safety regulator is to ensure a safe online environment for users in Singapore and to protect children, in particular, from harmful content,” said IMDA.

“While IMDA adopts a collaborative approach to engage with designated social media services, we will hold (them) accountable when we assess that their online safety measures do not adequately achieve the outcomes of the code.”

The Code of Practice for Online Safety works with the Code of Practice for Online Safety for App Distribution Services to rein in harm. The latter requires app stores to screen and prevent minors from downloading age-inappropriate apps from April 1.

By June, a new Online Safety Commission will also start issuing directions to disable users’ access to harmful content, or restrict the perpetrators’ online accounts, upon receiving reports of harassment, intimate image abuse, doxing or deepfakes.

Across the world, some countries have banned underage users from downloading social media apps. In December, Australia became the first country in the world to ban under-16s from using TikTok, X, Facebook, Instagram, YouTube and Snapchat.

In March, a jury in New Mexico in the US found Meta liable for misleading users about the safety of its platforms and failing to protect children from exploitation. The tech giant has been ordered to pay US$375 million (S$483 million) in civil penalties. Meta said it would appeal against the verdict.

A week ago, Meta and YouTube were also ordered to pay US$6 million in damages to a woman in the US, after the companies were found to have harmed her through the addictive design of their platforms. Both companies said they would appeal against the decision.

Singapore has plans to extend age assurance requirements to designated social media services. Discussions are ongoing.