ScienceTalk

Spotlight on trust in science of Covid-19

Grave errors can be missed when medical journals publish reports generated hastily


An article in The Lancet suggesting hydroxychloroquine raised the risk of death in coronavirus patients was retracted last week.

PHOTO: AGENCE FRANCE-PRESSE

Within a few hours of each other last week, two of the world's most prestigious medical journals - the New England Journal of Medicine (NEJM) and The Lancet - each retracted an article about treatment of patients with Covid-19.

The Lancet publication had suggested that hydroxychloroquine (HCQ) increased the risk of death in coronavirus patients.

Since the findings of both studies could not be substantiated by the authors, the papers were retracted.

But much damage had already been done.

Following the paper, the World Health Organisation issued a global warning about using HCQ and stopped recruitment for the drug in its Solidarity trial, in which hundreds of hospitals across several countries have enrolled patients to test possible treatments.

Several national health authorities followed suit.

HCQ has been in use since the 1950s, and its older version, chloroquine, for even longer.

They are used as prophylaxis (prevention) for malaria and to treat systemic lupus erythematosus, a chronic inflammatory disease.

The medicine is on the World Health Organisation's list of essential medication. So the risk-benefit ratio is well in favour of using HCQ and it would take a lot to change that perception.

So how did the world's two most prestigious medical journals make such a fatal mistake?

Since HCQ could theoretically be used both for the treatment of Covid-19 patients and to prevent infection in healthy individuals, the world is understandably hungry for data to guide decision-making for this drug, both in hospitals and in the community.

The Recovery trial, a large United Kingdom-based trial investigating potential coronavirus treatments, has stopped including HCQ in its study due to there being "no evidence of benefit", researchers announced on June 5.

Other arms of the trial, which has enrolled more than 11,000 patients from 175 hospitals across Britain, continue.

In Singapore, both the National Centre for Infectious Diseases and National University Hospital have largely stopped using HCQ to treat Covid-19 patients.

As for whether it can reduce the risk of infection, a Minnesota prevention trial using HCQ was published in NEJM last week, showing no benefit to high-risk healthcare workers.

So where does this leave us?

While both the Recovery trial and the Minnesota trial demonstrate that using HCQ is safe, neither supports using it in the fight against the virus.

Still, whether HCQ is useful in preventing the disease remains undetermined, as the NEJM study is small and few people in it developed the disease. We are still awaiting good research and trials, based on good data, to determine whether HCQ is effective.

PEER REVIEW PROCESS

To better understand how science can go wrong, one must be familiar with how scientific work is published and disseminated.

All papers are reviewed and scrutinised in detail by peers - scientists with similar expertise - in a process known as peer review.

This is an unpaid and unrecognised job that serves as a bastion to ensure high-quality work is published in the best, most transparent and fairest way.

This is often done in several rounds before the final work is accepted and published.

But all this takes time.

The average time taken from submission to acceptance of a paper is two to three months.

It often takes even longer for more prestigious journals.

When Covid-19 struck the world with a vengeance, the scientific community scrambled to describe the virus, the disease and possible treatments and outcomes. The world was hungry for information and there was a substantial dearth of it.

The first papers describing the disease appeared in early January.

Since then, the rate of publication has been almost exponential.

Close to 25,000 papers have now been authored about Covid-19, describing everything from the virus' genetic structure, host immune response and clinical presentation to mortality figures and healthcare responses to the pandemic.

This is an astounding number of papers published in a remarkably short time, considering that the process involves doing the actual research, preparing the paper, obtaining institutional review board approval and, finally, submitting the paper and having it accepted after peer review.

Part of this process would involve checking the quality of data sets and appropriateness of the analysis done. And that is where the two retracted papers fell short.

Historically, researchers collected their own data, but third-party data is increasingly being used.

In both retracted papers, the authors had relied on data from a third party, Surgisphere, which provided dubious data and refused to put it up for scrutiny, ultimately leading to the retractions.

COVID-19 SHORTCUTS

An average paper conceived in pre-pandemic times would not have been fast-tracked by host institutions and journals.

For 25,000 papers to be published in such a short period of time, something would have to give, leading to shortcuts in the usual well-established processes.

To make this possible, institutions streamlined administrative approvals, dedicated grant funding became readily available, and journals created fast-track pathways from submission to acceptance.

Many journals, including The Lancet, created special Covid-19 sections to allow for fast dissemination of findings.

Journals depend on readership and citations of their articles. Thus, the first journal to publish a breakthrough or a unique finding gets more headlines and more citations.

In the age of Covid-19, many prestigious journals have published work they would not normally accept.

In the rush to save lives, the haste is understandable.

But the collateral damage is the quality and reproducibility of work, substantial overlap of findings and, at worst, results which are wrong.

A common statement at the end of an article is: "These findings would need confirmation by others."

We know this to be true as the ordinary reproducibility of highly regarded science is unfortunately low. It is at times referred to as a reproducibility crisis, where as many as 50 per cent of scientists report an inability to replicate other scientists' work.

This simply reinforces the universal knowledge that all data needs validation because of inherent reproducibility issues.

It is important to recognise that inability to reproduce a finding does not mean the authors have cheated, misled or misrepresented their data.

However, it is a red flag pointing to the complexity of scientific work where variations in reagents, cell cultures, animals, patient population and sample size can all lead to distortions of the true effect.

When the world is in dire need of information about Covid-19 and journals are publishing reports that are generated hastily, grave errors can be missed.

This is precisely what happened in the case of The Lancet, when a world-leading medical journal rushed to publish work written by authors from one of the world's most prestigious medical universities.

The end result: Lives are put at risk and there is reduced trust in science, at a time when we need it the most.

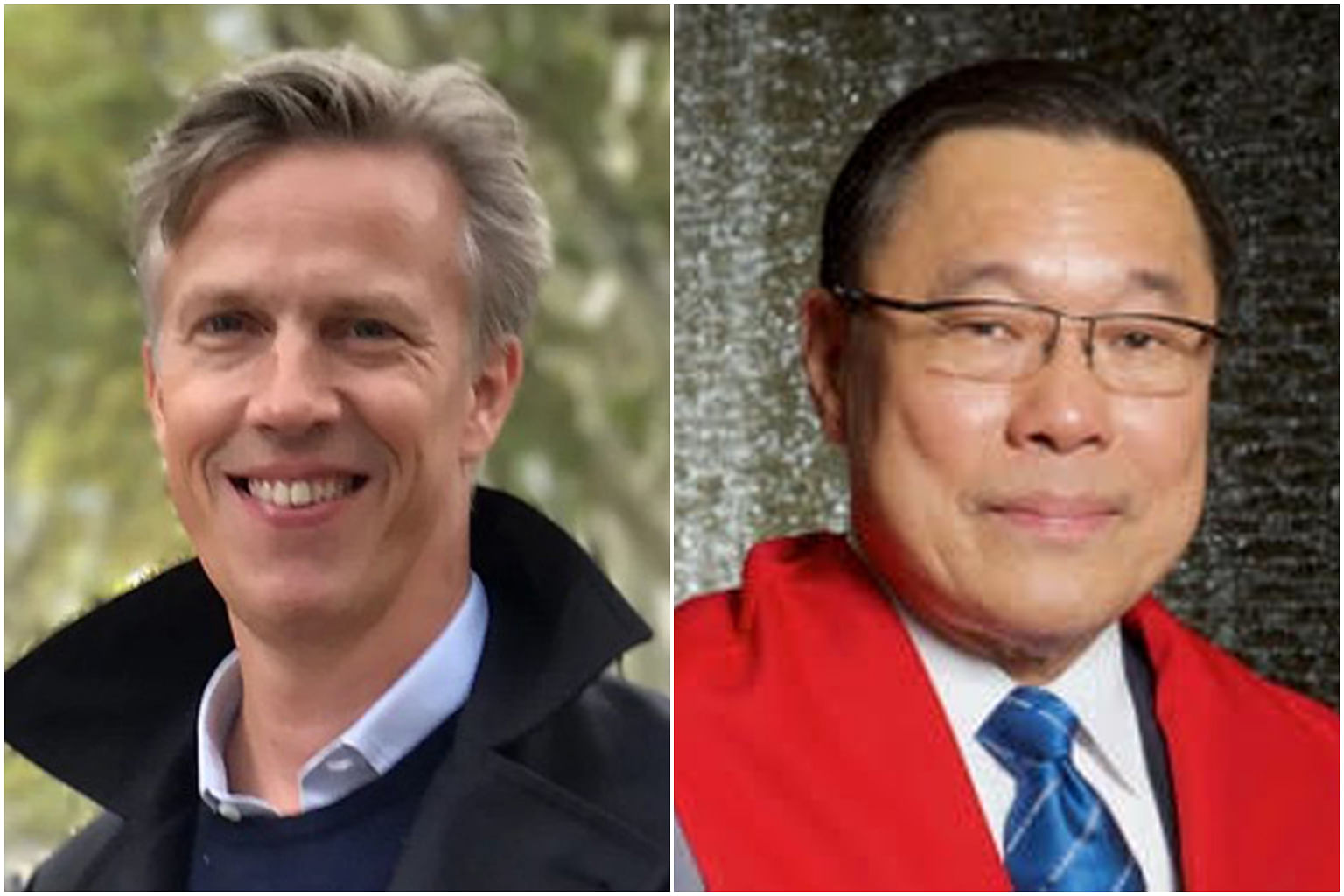

About the writers

Associate Professor Mikael Hartman is a senior consultant and head of the division of general surgery at the National University Hospital. He is also programme lead of the Breast Cancer Prevention Programme at the National University of Singapore's Saw Swee Hock School of Public Health.

Professor Lee Chuen Neng is the Abu Rauff Professor in Surgery and professor in engineering at NUS. In addition, he is clinical director of the Institute for Health Innovation and Technology at the university, and a cardiothoracic surgeon at the National University Hospital.