University rankings: Double-edged sword?


The topic of university rankings remains a hot one, given the Singapore psyche of tracking global rankings as a method of validation.

ST PHOTO: MARK CHEONG

SINGAPORE - University ranking season is back once more, but the annual exercise has come under a cloud this year, even as Singapore’s institutions continue to do well in the global league tables.

Yale and Harvard law schools’ decision on Thursday to cease being part of an influential rankings list followed an earlier controversy where another top US university admitted to submitting inaccurate data, reopening debate over the real-world utility of university rankings.

Insight looks at why college rankings continue to be closely watched by everyone from administrators to prospective students, and what is being done here to widen the metrics by which universities are judged.

A growing influence despite controversies

Last week, two of the most reputable law schools in the world said on the same day that they would stop participating in an annual rankings exercise, charging that the methodology being used was profoundly flawed.

Harvard said the US News and World Report’s law school rankings rely on a student debt metric that incentivises schools to admit better-off students who do not need to borrow, while pushing them to spend financial aid on attracting high-scoring students rather than on those with the greatest need.

Yale, meanwhile, said the rankings penalised schools that encourage their students to pursue public service careers over higher-paying jobs by characterising them as “low-employment schools with high debt loads”, which it slammed as a backward approach.

These are potential red flags of perverse incentives for schools trying to climb the leaderboards. Separately, Columbia University admitted in September that it had submitted inaccurate data to US News, after a member of its faculty questioned its meteoric rise up the rankings.

The Ivy League institution said it had previously provided incorrect undergraduate class size and faculty qualifications data and would sit out this year’s rankings submissions. That did not stop US News from still ranking the school, which fell from 2nd to 18th place.

The topic of university rankings, and whether local institutions rise or fall every year, remains a hot one, given the Singapore psyche of tracking global rankings as a method of validation, whether of its airport or something more intangible like the city state’s business competitiveness.

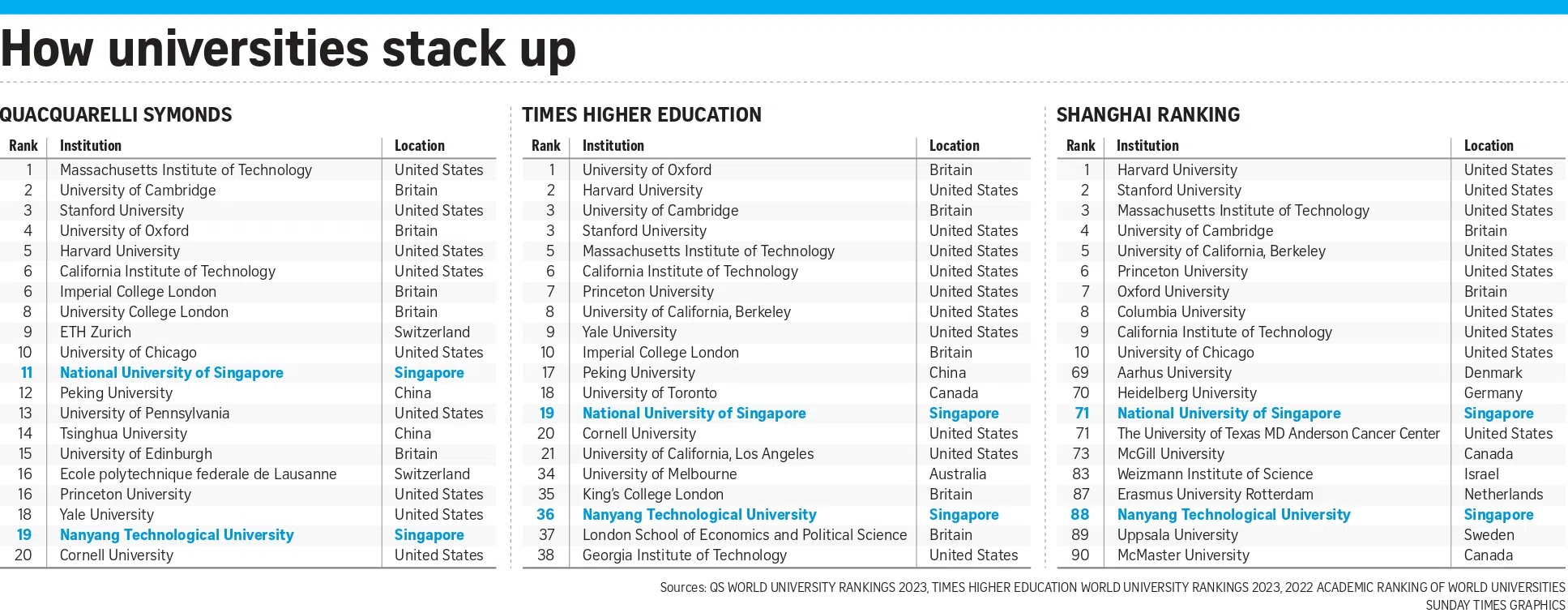

News last month that the National University of Singapore (NUS) and Nanyang Technological University (NTU) continued to progress up the Times Higher Education (THE) World University Rankings drew collective cheer online, while reports last week that the two universities slipped in the latest Quacquarelli Symonds (QS) ranking of Asia’s universities were met with angst in some quarters.

This is despite such rankings paying limited or no attention to criteria such as quality and innovation in education, or how a university enriches the local community, said Emeritus Professor Arnoud De Meyer, who was previously president of the Singapore Management University (SMU).

“I don’t believe at all in rankings that use opinions or perceived standing in the academic community as a major input,” he said – a metric used by both THE and QS.

The debate over whether local universities are too focused on such rankings over other measurements is not new, but is also not a settled question.

In 2019, NUS and NTU defended their promotion and tenure policies as robust, following criticism by some academics that the universities’ recruitment schemes were an “escalating arms race” to pursue talent that could help them reach greater heights in university league tables.

While such concerns have receded, a new arms race is afoot that speaks to the growing influence of university rankings among economies seeking to attract top talent.

Britain’s new visa scheme announced earlier this year grants “high-potential individuals” permission to stay there for up to two years – three if they have a PhD or doctorate. They are defined as graduates from universities that are in the top 50 of at least two of the three main global university rankings.

Last month, Hong Kong rolled out a similar scheme, whereby graduates from the world’s top 100 universities who have work experience can apply to stay in the territory for two years “to explore opportunities”. There is no quota for the scheme’s initial run.

In Singapore, Manpower Minister Tan See Leng said in October that his ministry is reviewing which international rankings it would rely on to determine the list of top 100 universities under a “top-tier” category in the Complementarity Assessment Framework, to be used by employers to select high-quality foreign professionals.

Professor Hiroshi Ono of Hitotsubashi University Business School in Tokyo told Insight that the rankings are extremely influential, if not too influential.

“The rankings exist because they are convenient – if we wanted to know the true worth of a university, it would take a lot of time, money and energy to investigate,” said Prof Ono, who has researched and written about the impact of ranking systems.

“Individuals and employers simply don’t have the resources, so they resort to ‘cheap’ signals as a way to assess the value of a university.”

News last month that NUS and NTU continued to progress up the Times Higher Education World University Rankings drew collective cheer online, while reports last week that the two universities slipped in the latest Quacquarelli Symonds ranking of Asia’s universities were met with angst in some quarters.

ST PHOTOS: GAVIN FOO, KELLY HUI

Rankings have their place: Local universities

Researchers said the problem is that most rankings rely on indicators that are, at best, proxies for quality.

Given the notorious difficulty of measuring universities of different sizes on a global scale, indicators – such as staff-to-student ratios and alumni with Nobel prizes – tend to be poor proxies in the bid to measure different aspects of quality, said research policy manager Elizabeth Gadd of Loughborough University in Britain.

“Teaching and learning is a principal aim of all higher education institutions, and yet has no bearing on an institution’s rank,” she wrote in the Frontiers research journal in September last year.

Anyone seeking to rely on such rankings should also take care to find out their methodologies, said Professor Peter Coclanis, who is the director of the Global Research Institute at the University of North Carolina at Chapel Hill in the US.

For instance, some surveys are biased towards older, larger and more established institutions, while relying on questionable measurements such as reputational surveys, which ask academics which schools they believe put out the best research, said Prof Coclanis.

Prof De Meyer said the current way of measuring research through the number of publications and citations also favours the hard sciences and medicine. This means universities without medical schools are often disadvantaged in most rankings, he added.

And there is evidence that rankings do shape universities’ behaviour. Prof De Meyer said many business schools have guided their faculty to publish in the 50 journals that the Financial Times uses in its ranking of Master of Business Administration programmes to measure research output.

As a consequence, these universities tend to pursue the same cookie-cutter approach to research and teaching, and risk becoming relatively similar, he said.

On their part, the local universities said rankings have their place as an indicator of a university’s performance, but should in no way be taken as the final word on the matter.


ST ILLUSTRATION: MANNY FRANCISCO

Professor Bernard Tan, NUS’ senior vice-provost (undergraduate education), said rankings provide an independent assessment of a university’s standing that is grounded in a chosen methodology.

But they do not capture other useful information such as the quality and employability of graduates, student and campus life, the variety of financial aid schemes, opportunities for global exposure and entrepreneurial culture, among other aspects.

An NTU spokesman said rankings provide a useful reference for a young university to compare its development and progress with that of centuries-old, established universities. However, they may not fully measure the unique strengths and missions of a university in serving its communities and society, he added.

SMU president Lily Kong said commercial university rankings do not give sufficient attention to areas such as lifelong learning and, in Singapore’s context, the important role universities play in public service.

“Rankings draw attention because of the seduction of simplicity, where a reductionist digit is supposed to tell much about a university,” she said. “The Singapore Ministry of Education (MOE) did away with school rankings for good reason, and those reasons ring true for university rankings too.”

In response to queries, both QS and THE said their rankings provide independent, data-driven insights that help students compare universities across countries despite the different frameworks used by different nations to assess higher education.

The rankings also support policymaking, such as in immigration and scholarships, said Mr Phil Baty, THE’s chief knowledge officer.

QS rankings manager Andrew MacFarlane said rankings are being increasingly leaned on because they help universities differentiate themselves and attract students in a fiercely competitive and noisy market.

Both firms also said they have strict quality assurance processes to ensure the integrity of data used in their rankings. While their main rankings compare larger varsities, the diversity of universities is recognised through other rankings with more specific aims.

Augmenting rankings

For its part, Singapore has been looking into building its own system to measure how well universities here are meeting society’s needs, to make up for the commercial rankings’ shortcomings and blind spots.

In June, a group of experts appointed by MOE to advise the Government on higher education issues recommended that autonomous universities (AUs) here work with international partners to develop a more holistic set of metrics.

Such a system should go beyond “one-size-fits-all international rankings” to capture the distinct purpose and mission of each university here, they said.

The International Academic Advisory Panel, consisting of eminent academics and corporate leaders, also advocated that institutions explore new ways for faculty to keep pace with industry developments, and strengthen experiential and multidisciplinary learning for students, among other suggestions.

In essence, the panel was echoing a recommendation it had made at its previous meeting in 2018, for there to be an evaluation framework that better measures how universities here have met national priorities, as a complement to existing rankings.

Then-Education Minister Ong Ye Kung had said in response that he heard the call for each university to be evaluated on its distinctive objectives – such as in teaching, fostering lifelong learning, research, or enterprise – and that MOE was studying the issue with the AUs.

In September, Education Minister Chan Chun Sing said that local universities have broadened their assessment frameworks beyond published research to recognise faculty who have made an impact in areas such as public policy and industry, and to consider these contributions for promotion and tenure decisions.

Independent external validators are also engaged to assess the quality of teaching and research that is done in Singapore’s universities, Deputy Prime Minister Lawrence Wong said in 2020.

In response to queries seeking information on the evaluation frameworks and external validators, MOE said it takes a long-term and holistic perspective when assessing the impact of Singapore’s six AUs.

“The ministry is not distracted by rankings based on any partial set of metrics,” said an MOE spokesman.

“We recognise that each AU offers a distinct proposition to Singapore and the world – in areas such as teaching and learning, student and faculty development, research impact, industry partnerships, lifelong learning, and deepening the appreciation of our unique national context.”

MOE works closely with the AUs as part of their continual efforts to improve, added the spokesman. These include periodic assessments by independent panels, which provide recommendations for improvement.

Mindsets decide importance

Human resource experts said a majority of employers today no longer refer to university rankings in assessing job applicants, though there are holdouts.

Fortune 500 companies may have higher expectations for those who join them, said Ms Jaya Dass, managing director of permanent recruitment in the Asia-Pacific at Randstad. “So for these top-tier firms, ranking and university names will matter, as well as connections to alumni and networks.”

But most firms are more concerned about the competencies of job applicants than the pedigree of their degree.

“Statutory boards and government agencies are more pro-local universities when they are hiring, unless it’s a highly ranked foreign university, although this is also changing,” she said.

Dr David Leong, managing director of Peopleworldwide Consulting, said trustworthiness and personal values are among the hardest things to evaluate, and rankings are no help in assessing them.

A case in point is that of the 11 trainee lawyers who cheated in the Bar examination, he said. “They come from good schools and universities, but values and ethics cannot be closely examined in a few rounds of interviews.”

Anecdotally, students whom Insight spoke to said they looked at rankings in a more nuanced way, as part of a suite of factors rather than in isolation.

Mr Samuel David, 25, who is enrolled at NUS, said he had considered the university’s overall reputation and ranking when deciding where to study. He is currently completing a bachelor’s degree in social science (social work) and a master’s in public policy.

He also looked at how it fared in the specific subject area that he was planning to study. “NUS is ranked fourth in the world for social policy in the QS ranking, and that must mean that it has some expertise and experience in that field,” he said.

But Prof Ono said initiatives such as Britain’s new visa programme seem to send signals that university rankings continue to be a crutch.

Singapore’s similar move to use a list of top 100 institutions to determine criteria for employment reinforces his position that employers and governments have become increasingly lazy and unwilling to make the extra effort to know applicants’ true abilities, he added.

“By using the list of top schools as a screening device, employers and governments are feeding into the social reproduction of elites. Universities that are included in the list can congratulate themselves, students will aim for the universities on the list, and the list will become a self-reinforcing mechanism, independent of people’s true abilities,” he said.

In a nutshell, whether countries can wean themselves – and their people – from an obsession with rankings will depend on a broader mindset shift, as well as a commitment to policies that do not prize such league tables and inadvertently signal their continuing importance.

If not, the end of the race for credentials will remain a long way off, said Prof Ono.