AI disinformation tests South Korean laws ahead of local elections

Workers searching for fake content through social media related to election campaigns at the headquarters of the National Election Commission in Gwacheon, South Korea, on April 16.

PHOTO: AFP

GWACHEON, South Korea – In an airy office in South Korea, workers comb through social media, uncovering AI-generated content whose growing sophistication is testing toughened election laws ahead of local polls.

Experts warn that cheaper, more advanced artificial intelligence models are driving the global spread of online disinformation – a major concern in South Korea, which has adopted AI particularly rapidly.

The government strengthened the law in 2023 to counter the misuse of AI around elections, and has hired hundreds of staff to track and counter manipulated content ahead of local ballots on June 3.

But some say they feel like they are fighting an uphill battle.

“We can literally see how fast this technology evolves – like how each new version of AI makes videos and audio look and sound even more convincing,” disinformation monitor Choi Ji-hee said.

“Our job keeps getting harder and harder,” she told AFP at the National Election Commission (NEC) headquarters in Gwacheon, just south of Seoul.

On a recent workday, Ms Choi and 18 colleagues clicked through Instagram, YouTube and other platforms, as well as online chatrooms and “fan clubs” for local politicians, in search of content concocted by AI.

Recent finds include a fake TV news report claiming a mayoral candidate had made Time magazine’s list of rising political leaders, and a slick, AI-produced K-pop song praising a politician while mocking his rivals.

Once the authorities confirm that content is the work of AI, they can demand its removal and issue harsh punishments, including jail time in extreme cases.

In one corner, workers discussed how to dissect a suspicious video, mulling over whether to separately extract its audio, key frames, facial images and background footage.

Nearby, data analyst Kim Ma-ru mapped where, when and by whom fake materials had been distributed, helping Ms Choi’s team detect dubious content more quickly.

‘Whack-a-mole’

The local polls are the third major ballot in South Korea since an amended law to combat AI-fuelled election falsehoods was passed in 2023.

More than 45 per cent of South Koreans use generative AI, according to government figures. ChatGPT maker OpenAI says the country has the most paid subscribers outside the US.

At the same time, South Koreans consume more low-quality generative content – “AI slop” – than any other country, and reports of false AI-created content rose 27-fold between the general election in 2024 and the presidential campaign the following year.

“It’s an exhausting job that can feel like a (game of) whack-a-mole,” data analyst Kim told AFP.

“But it’s important work – there’s a sense of civic duty in it.”

AFP has debunked AI-generated election disinformation in South Korea, including a video of the 2025 presidential front runner Lee Jae Myung – now the country’s leader – purportedly faking a hunger strike.

Beyond fake content about candidates, conspiracy theories about vote-rigging in recent years have also dented public trust in elections.

Jailed ex-president Yoon Suk Yeol sent hundreds of armed troops to the NEC during his short-lived bid to impose martial law in late 2024, repeating widely disproven far-right claims of vote hacking.

On the street outside the office, pro-Yoon protesters have hung a banner reading: “Investigate the rigged elections immediately!”

Both Ms Choi and data analyst Kim declined to be photographed or filmed, citing growing threats and online bullying targeting election workers.

The South Korean government has hired hundreds of staff to track and counter manipulated content ahead of local ballots on June 3.

PHOTO: AFP

Strict laws

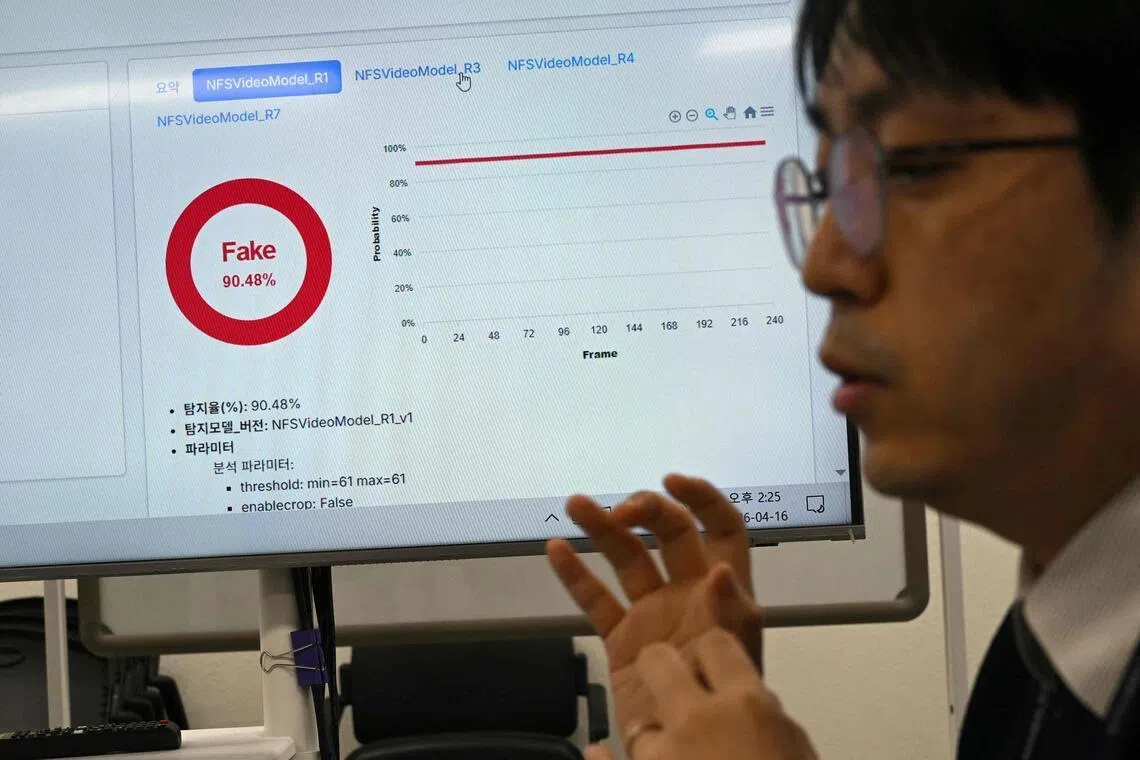

“In such a short time, it has become so difficult for voters to tell what is real and what is not,” said Mr Jung Hui-hun, a digital forensic specialist at the NEC’s cyber investigations unit, as he ran videos through state-developed software tools to detect AI imagery.

Officials say the programmes are about 92 per cent accurate, with human experts reviewing the most sophisticated material.

Once confirmed, the authorities demand that either the poster or the platform remove the content for violating the 2023 law, which bans AI material that involves candidates and looks realistic enough to confuse voters in the three months before a poll.

Repeat offenders, or those who create content deemed particularly harmful, can face up to seven years in jail or a maximum fine of 50 million won (S$43,600).

“The rules may seem excessive to those outside South Korea, especially in places like the US that highly prioritise freedom of expression,” Dr Kim Myuhng-joo, director of the Korea AI Safety Institute, told AFP.

But as swiftly as South Koreans embraced AI, many grew aware of its dangers, Dr Kim said, citing the election conspiracy theories and a public scandal around deepfake pornography targeting women and girls.

“Public consensus has formed that we need tough regulations over the use of AI when it comes to election transparency,” Dr Kim said.

A survey last year showed 75 per cent of South Koreans believed AI-generated content could sway election results, and nearly 80 per cent supported stronger efforts to detect and punish its use.

Mr Jung, the digital forensic specialist, acknowledged the country’s response had “many limits” but voiced hope it would spur debate on how to tackle AI-fuelled disinformation.

“We’re still trying to figure out what is the best solution... but I think we are moving forward – slowly but surely,” he said. AFP