DETROIT • The race by carmakers and technology firms to develop self-driving cars has been fuelled by the belief that computers can operate a vehicle more safely than human drivers.

But that view is now in question after the revelation on Thursday that the driver of a Tesla Model S electric sedan was killed in a crash when the car was in self-driving mode.

Federal regulators, who are in the early stages of setting guidelines for autonomous vehicles, have opened a formal investigation into the accident, which occurred on May 7 in Williston, Florida.

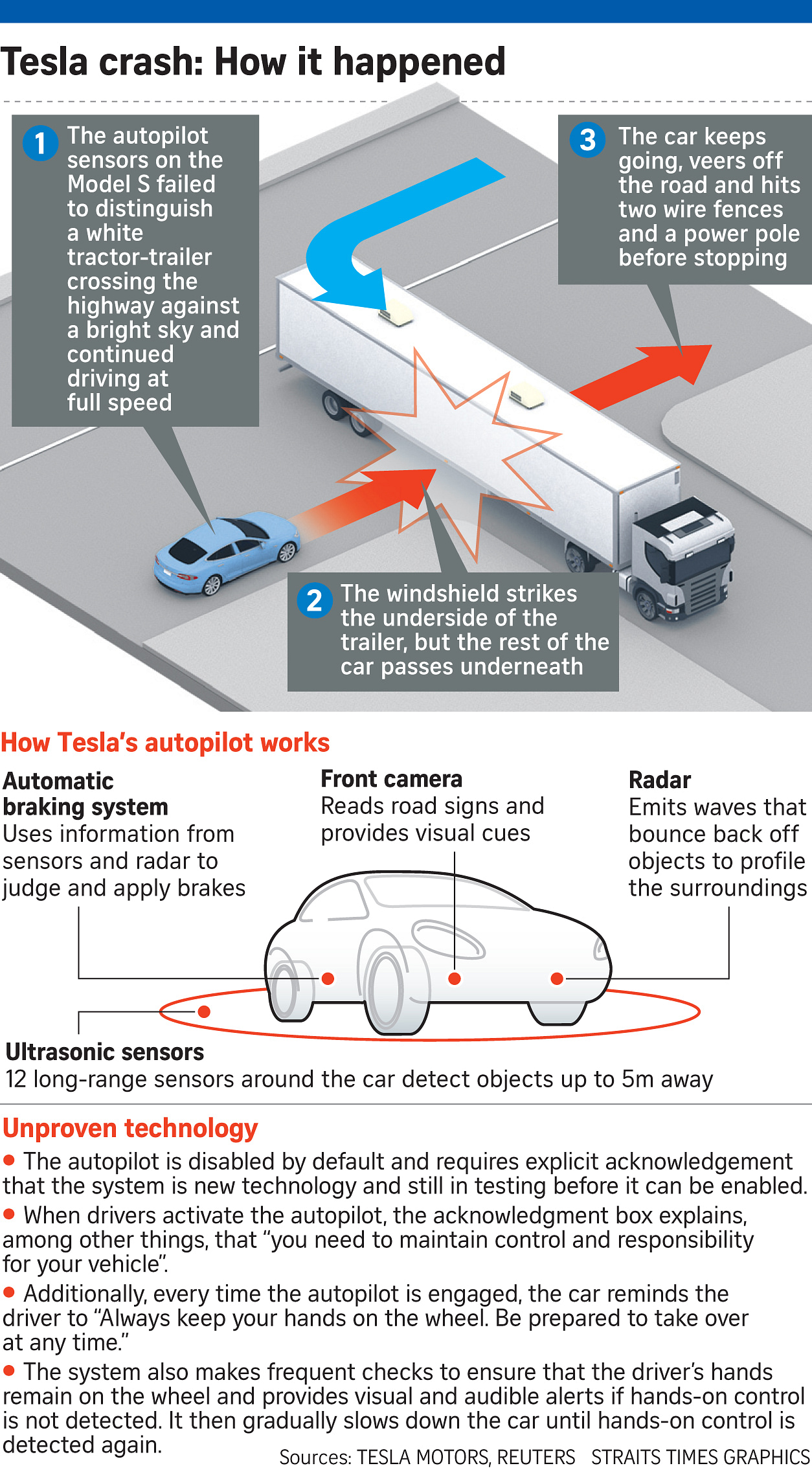

In a statement, the United States' National Highway Traffic Safety Administration said preliminary reports indicated that the crash occurred when a tractor-trailer made a left turn in front of the Tesla, which failed to apply the brakes.

It is the first known fatal accident involving a vehicle driving itself by means of sophisticated software, sensors, cameras and radar.

The safety agency did not name the Tesla driver who was killed. But the Florida Highway Patrol said he was Mr Joshua Brown, 40, of Canton, Ohio. The navy veteran owned a technology consulting firm.

Mr Brown had posted videos on social media of himself riding in autopilot mode. "The car's doing it all itself," he said in one, smiling as he took his hands from the steering wheel. In another, he praised the system for saving him from an accident.

The death is a blow to Tesla at a time when it is pushing to expand its line-up from pricey electric vehicles to more mainstream models.

The company on Thursday declined to say whether the technology, the driver or both were at fault in the accident. It said in a statement: "Neither autopilot nor the driver noticed the white side of the tractor-trailer against a brightly lit sky, so the brake was not applied.

"The high ride height of the trailer, combined with its positioning across the road and the extremely rare circumstances of the impact, caused the Model S to pass under the trailer, with the bottom of the trailer impacting the windshield of the Model S."

Even as companies like Tesla, GM and Google conduct tests on autonomous vehicles both at private facilities and on public highways, the crash casts doubt on whether autonomous vehicles in general can consistently make split-second, life-or-death decisions on the highway.

The safety agency said it is working with the Florida Highway Patrol in the inquiry, and that the opening of the investigation does not mean it thinks there was a vehicle defect.

A new set of guidelines and regulations regarding the testing of self-driving vehicles on public roads is expected to be released this month.

At a recent technology conference in Novi, Michigan, the agency's leader, Mr Mark Rosekind, said self-driving cars should be at least twice as safe as human drivers to result in a significant reduction in roadway deaths.

"We need to start with two times better," Mr Rosekind said. "We need to set a higher bar if we expect safety to actually be a benefit here."

In the past, Tesla chief executive Elon Musk praised the self-driving feature, introduced in the Model S last autumn, as "probably better than a person right now".

But on Thursday, the company cautioned that it was still a test feature and its use "requires explicit acknowledgment that the system is new technology".

NEW YORK TIMES